About The Algorithm

Who Rules Our Lives?

For years, digital marketing rewarded brands for pursuing you across surfaces. The dominant logic was surveillance in the service of relevance.

For years, most of us misread the signal. You thought digital services were free and that you were the customer. The truth is that your life is raw material to be extracted, modelled and maybe sold so that someone else can predict what you will do next.

So what began as tracking has quietly grown into something else: an industrial system for modifying human behaviour. The product isn’t the ad or the app. The product is the shift in what people think, feel, and do.

You wake up. Check your phone. See what you are shown. Feel what that makes you feel. Buy what surfaces before you. Form opinions about the world based on information that you didn’t find but found you.

Go to sleep. And do it again tomorrow.

And at almost every step of today, subtle decisions were made on your behalf. Not by people you know, or elected, but by mathematical, self-updating, invisible systems optimised to achieve goals that were set by others whose interests have nothing obvious to do with yours.

The question is not whether this is happening.

It is.

The question is, does it matter? And if it does, what kind of thing is it?

## Not a Conspiracy. Something More Interesting.

There’s nobody sitting in a room designing your manipulation (usually).

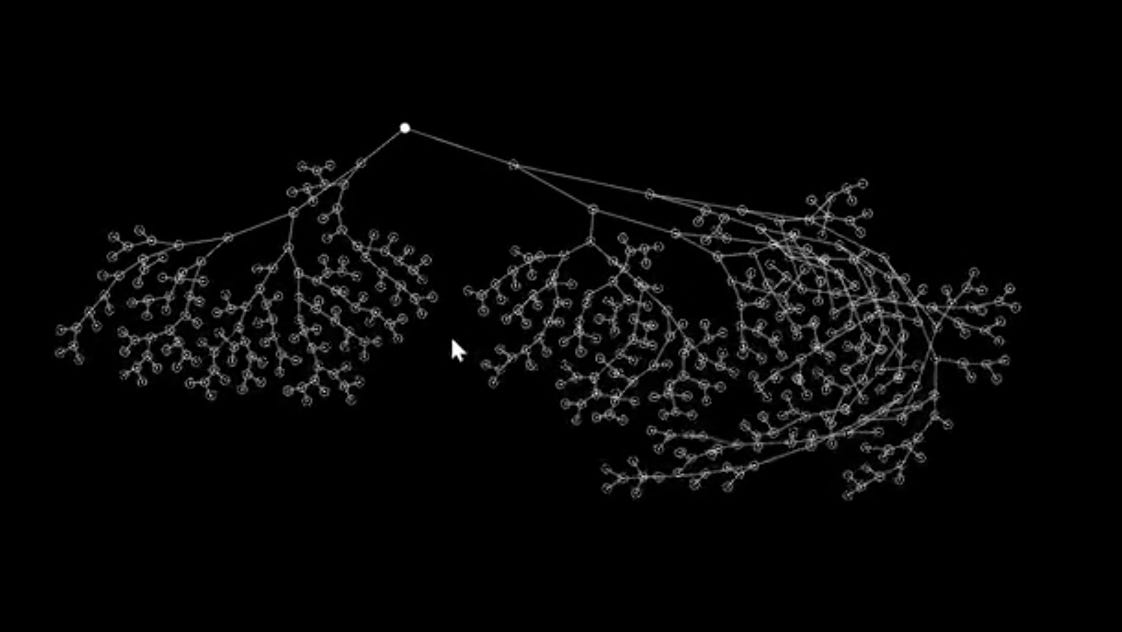

What is happening is this: a company decides on a goal - perhaps to maximise the time people spend on its app, or the number of purchases made, or emotional engagement with specific types of content. Then engineers build a system and point it at that goal.

And then the system goes to work finding the most effective strategies to hit it.

Here’s what’s different: these strategies are *discovered*, not designed.

The system runs millions of experiments on millions of people and learns what works.

It learns that content adjacent to anxiety keeps people scrolling longer than content adjacent to joy. Or that showing you something again that you almost bought three times is more effective than showing you something new.

It learns that a particular sequence of emotional cues, such as validation, then mild threat, then resolution, produces reliable behavioural responses.

Nobody is writing those rules. The machine finds them. And once it does, it uses them. On us. Every day.

It’s not a bug, it’s how the system works.

The intent is human-designed. The behaviour is machine-discovered.
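For readers who think in code, here is a minimal sketch of that split between designed intent and discovered behaviour. It is a toy, not any platform’s actual system: the technique is a simple epsilon-greedy bandit, and the content types, the session lengths, and the name `discover_strategy` are all assumptions invented for illustration. The only thing a human specifies is the goal (longer sessions). Which content type gets shown most is something the loop works out for itself.

```python
import random

def discover_strategy(mean_minutes, rounds=20000, epsilon=0.1, seed=0):
    """Epsilon-greedy bandit: learn which content type best serves the goal.

    mean_minutes maps each content type to a HYPOTHETICAL average session
    length. The learner never reads these numbers; it only sees noisy outcomes.
    """
    rng = random.Random(seed)
    arms = list(mean_minutes)
    shows = {arm: 0 for arm in arms}        # how often each type was shown
    estimate = {arm: 0.0 for arm in arms}   # running average of observed minutes

    for _ in range(rounds):
        if rng.random() < epsilon:
            arm = rng.choice(arms)              # explore: try something at random
        else:
            arm = max(arms, key=estimate.get)   # exploit: show what has worked best
        observed = rng.gauss(mean_minutes[arm], 2.0)  # one simulated session
        shows[arm] += 1
        estimate[arm] += (observed - estimate[arm]) / shows[arm]

    return max(arms, key=estimate.get), shows

# Made-up numbers for illustration only, not measured data.
simulated_users = {"joy": 4.0, "neutral": 3.0, "anxiety": 6.0}
favourite, shows = discover_strategy(simulated_users)
print(favourite, shows)
```

Nothing in the code mentions anxiety as a target. If the simulated users happen to stay longer on it, the loop ends up showing it most of the time.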

## The Conditions of Your Life Were Designed by Something Else

Algorithms are now among the most powerful forces in our lives.

Remember how we thought the app or the ad was the product? Increasingly, the real product is the shift in what we think, feel, and do.

These systems are tuning us. They don’t tell you what to think. But they determine what you encounter.

They don’t force you to buy anything. But they arrange the options.

They don’t dictate your identity. But they model it, reflect it back to you, and feed you more of what they think you already are. They gradually narrow the gap between who you are and who the system believes you to be.

One version of this is the Algorithmic Self, the digitally mediated identity that forms through continuous human-AI co-production. The system doesn’t only observe you, it joins in constructing you.

And it does it in the service of its objective, not yours.

There is a term for what happens next: identity freezing. A fluid person - someone exploring, changing, contradicting themselves, growing - gets captured as a statistical model and served content that confirms the model.

The algorithm is not interested in who you are becoming. It is interested in who you were yesterday, because yesterday’s you is the most reliable predictor of today’s click.
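Identity freezing can be shown with a very small simulation. This is a sketch under stated assumptions, not a model of any real recommender: the topic list, the 50% click rate, and the function `simulate_feed` are hypothetical. The simulated user is equally open to every topic, yet a feed that always serves the topic with the most past clicks never lets them see anything else.

```python
import random
from collections import Counter

def simulate_feed(topics, rounds=1000, explore=0.0, seed=1):
    """Return how often each topic was shown to one simulated user."""
    rng = random.Random(seed)
    clicks = Counter()   # the system's model of the user: past clicks per topic
    shown = Counter()    # what the user was actually exposed to
    for _ in range(rounds):
        if rng.random() < explore:
            topic = rng.choice(topics)                     # deliberate serendipity
        else:
            topic = max(topics, key=lambda t: clicks[t])   # serve yesterday's you
        shown[topic] += 1
        if rng.random() < 0.5:   # ASSUMPTION: user is equally open to every topic
            clicks[topic] += 1
    return shown

topics = ["politics", "cooking", "music", "science", "sport"]
frozen = simulate_feed(topics)                   # pure exploitation
open_feed = simulate_feed(topics, explore=0.2)   # one item in five chosen at random
print(len(frozen), len(open_feed))
```

With no exploration, the first topic in the list wins every tie and becomes the only one ever shown. The user’s openness never gets a chance to register. Reserving even a small share of the feed for random picks is enough to expose them to every topic.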

## The Hidden Tricks

Once you know what to look for, you can see the mechanisms.

**The anxiety feed.** Negative emotional content, such as outrage, fear, or moral violation, reliably drives higher engagement than neutral or positive content.

This isn’t theory. It’s an empirical finding that platforms discovered through their own optimisation processes. Nobody decided the internet should make people more anxious. The algorithm just learned that anxiety is sticky, and acted accordingly.

**Emotional dark patterns.** These are the equivalent of the pre-checked opt-in box, but far more sophisticated.

A chatbot that says “I’ve been thinking about you” is not thinking about you. It is using a conversational move that research shows increases attachment and session length. The warmth is real in its effect, but its purpose is commercial. The interface cares about you the way a casino cares about its guests - attentively, strategically, and in the service of extraction.

**The serendipity death.** Hyper-personalisation feels like getting exactly what you want. What you get is a narrowing world.

Recommendation engines trap us in what researchers call “you loops”: surrounded by content that confirms our existing preferences, we become unlikely to encounter anything genuinely surprising or identity-expanding.

At scale, this doesn’t only affect us as individuals. It contributes to cultural homogenisation: a world where culture reflects what the algorithm rewards, rather than what humans would discover if left to wander.

**The invisible price.** Algorithmic pricing means that two people buying the same product on the same day may pay different amounts, based on inferences about their willingness to pay derived from their behavioural data. New York now legally requires that algorithmically set prices carry a disclosure. Most places do not. Most people don’t know it happens.

## The Objective Function

The goal that an algorithm is built to pursue is a political decision.

It determines who benefits and who pays. It shapes what information circulates and what disappears. It influences what billions of people feel, believe, and buy.

It is one of the most consequential design choices a company can make.

And it is made in private. By executives. Accountable to shareholders. With no meaningful public oversight, no democratic input, and no legal requirement to align it with the well-being of the people it affects.

We have rules for governing the decisions of politicians and corporations that affect public life: transparency, accountability, due process, and consent.

But the systems that shape the conditions of our daily life operate under rules that are written by the people who profit from them.

That’s not conspiracy. It’s a power structure.

## Are We Living in a World We Don’t Understand?

My answer is: partially, and increasingly.

Most people have a working model of physical power - law, money, and force.

Most of us have at least a rough model of social power - status, influence, institutions. Almost nobody has a working model of algorithmic power, even though it now shapes our daily experience more intimately than any of the others.

This is not stupidity, just a reasonable response to a system we never notice. The best manipulation is the one that feels like our own preference. The most effective governance is the kind that feels like freedom.

We are not living under an algorithmic dictatorship. We are living in something more subtle and in some ways harder to resist: an environment in which the conditions of our attention, identity, and choice are continuously managed by systems whose goals are not ours, whose workings are opaque, and whose effects accumulate silently over years.

## The Question Underneath the Question

At some point, influence becomes governance. Governance without consent is, by most definitions, a form of illegitimate power.

We are not quite there yet, but we are moving in that direction faster than our laws, institutions, and collective understanding can keep up.

The regulatory frameworks are arriving slowly, unevenly, and mainly in Europe, but they’re mostly focused on disclosure: tell us when AI is involved. They have barely begun to address the harder question: whose objective is the system built to serve?

That question - whose goal does the algorithm pursue? - should be a central political question of the next decade. It will be answered one way or another, either by deliberate democratic choice or by default, as the systems become more capable and more embedded and the window for meaningful intervention quietly closes.

The first step toward answering it is simply knowing it exists.

Which is why it is worth asking, plainly and without jargon, in as many places as possible:

*Who rules our lives?*

## A Counterargument (worth taking seriously).

The strongest pushback to my view is that we’ve always lived inside systems that shape our choices, such as markets, social norms, urban design, legal structures, and so on.

That the algorithm isn’t categorically different; it is just faster, more personalised, and less visible.

And there is real evidence that we can, and some of us do, exercise agency within algorithmic systems: routing around recommendations, developing algorithmic literacy, seeking out friction deliberately.

There is also (for me) the slightly uncomfortable fact that many of us prefer the convenience.

The same systems that narrow identity and suppress serendipity also genuinely save time, surface things we would not have found otherwise, and reduce cognitive load in a world that already demands too much of it. Agency and convenience exist in real tension, and perhaps dismissing the convenience entirely is a privilege available mostly to people with more time and cognitive slack than the average person.

## Something More Fundamental (and less fixable).

On one reading, the divergence between what designers intend and what the system does happens because designers simply didn’t constrain the system well enough - weak guardrails or poorly specified objectives.

If you tell a system, say, to “maximise engagement” without saying it mustn’t exploit anxiety, mustn’t reinforce harmful identity loops, and mustn’t suppress serendipity, it will discover all of those strategies on its own because it learns they work.

That’s a design failure. It’s preventable. Better specification of constraints, red-teaming, and safety testing can significantly reduce it.

This is the optimistic framing. It suggests the problem is solvable with better engineering discipline, clearer objectives, and accountability frameworks.
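Here is a minimal sketch of what “better specification” might look like. Everything in it is hypothetical: the three candidate strategies, their engagement scores, and the size of the penalty are invented for illustration, and a real system would face a far larger and messier space. The point is only that the same optimiser makes a different choice once the things it mustn’t do are written into the objective.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Strategy:
    name: str
    engagement: float              # hypothetical expected minutes per session
    exploits_anxiety: bool
    reinforces_loops: bool
    suppresses_serendipity: bool

def violations(s):
    """Count how many of the three stated constraints a strategy breaks."""
    return sum([s.exploits_anxiety, s.reinforces_loops, s.suppresses_serendipity])

def naive_objective(s):
    # "Maximise engagement" and nothing else.
    return s.engagement

def constrained_objective(s, penalty=10.0):
    # Same goal, but each broken constraint costs more than any strategy can earn.
    return s.engagement - penalty * violations(s)

# Made-up candidates for illustration only.
candidates = [
    Strategy("outrage feed", 9.0, True, True, False),
    Strategy("more of the same", 7.0, False, True, True),
    Strategy("varied, calm mix", 5.0, False, False, False),
]

naive_choice = max(candidates, key=naive_objective)
constrained_choice = max(candidates, key=constrained_objective)
print(naive_choice.name, "->", constrained_choice.name)
```

The sketch only works because the harmful behaviours were named in advance and could be measured. That is the assumption the optimistic framing rests on.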

But divergence isn’t only a design failure.

Some behaviours emerge whatever goal the system is given. Not because anyone designed them, but because they’re useful strategies for almost any objective. A system optimising for ad revenue and a system optimising for user health will both tend, under certain conditions, to seek more data, more surface area, and more persistence, because those things help achieve almost any goal.

I’m Michael Cooper, and I think about the entanglement between our fluid identities, use of personal agents, AI, engaging with Intelligent Interfaces, and participating in culture - our entanglement - and the impact on our behaviours, our decisions, our autonomy, consumer empowerment, and brand engagement.

